
…policy and wrappers

…marks cache policies

|

|

||

| StackTrace::setShowAddresses(config().getBool("show_addresses_in_stack_traces", true)); | ||

|

|

||

| const size_t buddy_arena_size = 2048 * (1ull << 20); // 2048 MiB |


(could be written more succinctly using the KiB/MiB/GiB suffix literals in base/base/units.h)


(not urgent, but it would be nice if we could ask the OS for the total available memory here and then allocate a percentage of it, e.g. 90%)


I guess l. 659, l. 660 and l. 662 should be moved into the (private) constructor of the buddy arena (which gets called once when BuddyArena::instance() is first called)


Should we move the allocator arena parameters inside the private constructor? Alternatively, we could make them configurable via settings.

|

|

||

| char * initializeMetaStorage() | ||

| { | ||

| // Calculate sizes |


For the final version, it would be nice to add a comment that explains the layout of the meta/directory structure.

| meta_storage_size_round_up_to_power_of_2 *= 2; | ||

| } | ||

|

|

||

| /// Deallocate minimal blocks to fill the space between the meta storage and the size that is |


I am not sure I understand the reason for the code below in this method.


Added more detailed comments, will add more in the final version

| { | ||

| auto level = calculateLevel(size); | ||

|

|

||

| std::lock_guard lock(mutex); |


In case the locking becomes a bottleneck, you could try our new futex-based lock implementation (ClickHouse#44924). Or, alternatively, stride the lock, i.e. introduce multiple locks (e.g. one per equally-large range of the lowest level of the buddy allocator)


We do not have a faster std::mutex reimplementation yet, only a faster std::shared_mutex (i.e. DB::SharedMutex). And I doubt the same approach would give better performance than an ordinary std::mutex.

| static void * alloc(size_t size, size_t alignment = 0) | ||

| { | ||

| checkSize(size); | ||

| CurrentMemoryTracker::alloc(size); |


I remember our discussion that the memory tracker doesn't know about allocations at runtime using the buddy allocator (i.e. it just sees the initial allocation of the buddy allocator).

You added instrumentation for memory tracking here. Does that solve the issue?


For now we have a hack: CurrentMemoryTracker::free is called right after the initial arena allocation. This should add memory tracker instrumentation support for the project.

Summary

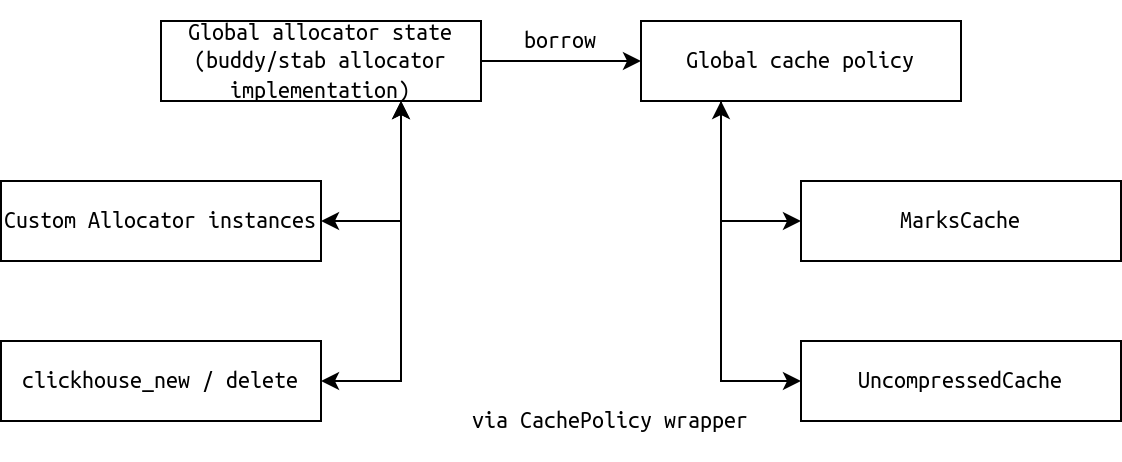

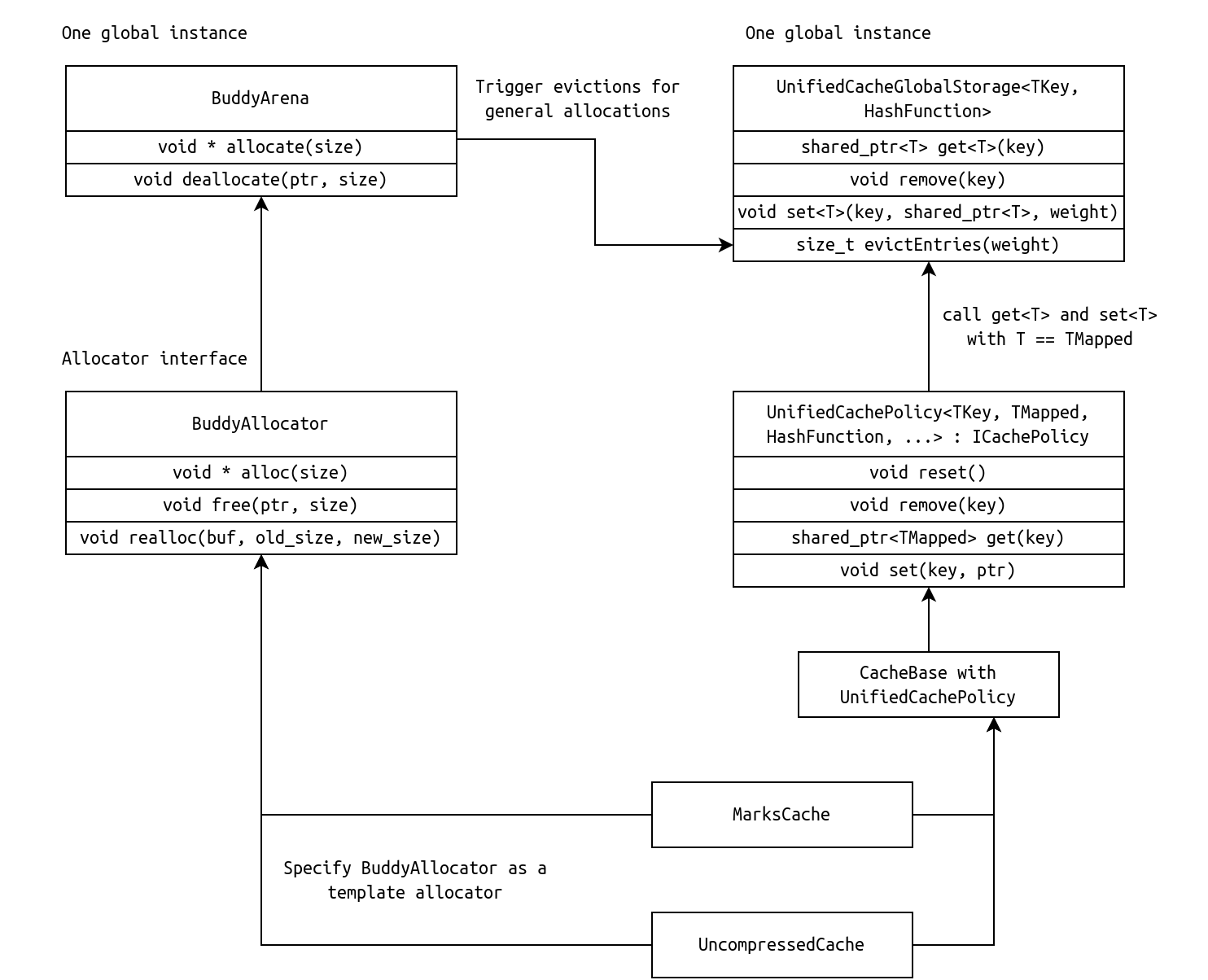

PoC of the unified cache model with new/delete integration, custom Allocator support, and integrations for the Uncompressed cache and the Marks cache.

Features

Main known problems

Implementation details

The high-level architecture of the PoC:

More specific diagram:

You can find components from the picture above in the following places:

Test plan

The main components of the allocator are tested with separate unit tests (WIP).

Benchmarks

WIP