)

@@ -588,7 +588,7 @@ When IPL (Initial Program Loader) in ROM starts to run on SCPU after power-on or

Both the SCPU and NCPU firmware run an RTOS: the SCPU handles the application, media input/output, and peripheral drivers, while the NCPU handles CNN model pre- and post-processing. The two CPUs use interrupts and shared memory for IPC (Inter-Processor Communication).

-

+

$AP = \sum_n (R_n - R_{n-1}) P_n$

where $P_n$ and $R_n$ are the precision and recall at the nth threshold. The mAP compares the ground-truth bounding box to the detected box and returns a score. The higher the score, the more accurate the model is in its detections.
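The summation above can be sketched in a few lines of Python. This is a minimal illustration, not the evaluation script used by these docs: the function names are hypothetical, the inputs are assumed to be ordered by increasing recall, and the recall before the first threshold is assumed to be 0.

```python
def average_precision(precisions, recalls):
    """Compute AP = sum_n (R_n - R_{n-1}) * P_n.

    Assumption: both lists are ordered by increasing recall,
    and the recall before the first threshold is taken as 0.
    """
    if len(precisions) != len(recalls):
        raise ValueError("precisions and recalls must have the same length")
    ap = 0.0
    prev_recall = 0.0
    for p, r in zip(precisions, recalls):
        ap += (r - prev_recall) * p  # precision weighted by the gain in recall
        prev_recall = r
    return ap


def mean_average_precision(per_class_ap):
    """mAP is the mean of the per-class AP values."""
    if not per_class_ap:
        raise ValueError("need at least one class")
    return sum(per_class_ap) / len(per_class_ap)


if __name__ == "__main__":
    ap = average_precision([1.0, 0.5, 0.75], [0.25, 0.5, 1.0])
    print(ap)  # 0.25*1.0 + 0.25*0.5 + 0.5*0.75 = 0.75
    print(mean_average_precision([ap, 0.25]))  # 0.5
```

Each term weights the precision at a threshold by how much recall was gained since the previous threshold, so a model that keeps high precision while recall grows scores close to 1.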

## Evaluation on a Dataset

To evaluate the trained model on a dataset:

diff --git a/docs/model_training/object_detection_yolov5.md b/docs/model_training/object_detection_yolov5.md

index c2fde03..9090d79 100644

--- a/docs/model_training/object_detection_yolov5.md

+++ b/docs/model_training/object_detection_yolov5.md

@@ -41,12 +41,12 @@ After using a tool like [CVAT](https://github.com/openvinotoolkit/cvat), [makese

- Class numbers are zero-indexed (start from 0).


-

+

-

+

$AP = \sum_n (R_n - R_{n-1}) P_n$

where $P_n$ and $R_n$ are the precision and recall at the nth threshold. The mAP compares the ground-truth bounding box to the detected box and returns a score. The higher the score, the more accurate the model is in its detections.

## Evaluation on a Dataset

diff --git a/docs/toolchain/appendix/app_flow_manual.md b/docs/toolchain/appendix/app_flow_manual.md

index 4c47ff8..7b4b426 100644

--- a/docs/toolchain/appendix/app_flow_manual.md

+++ b/docs/toolchain/appendix/app_flow_manual.md

@@ -91,7 +91,7 @@ The memory layout for the output node data after CSIM inference is different bet

Let's look at an example where c = 4, h = 12, and w = 12. Indexing starts at 0 for this example.
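To make the indexing concrete, here is a minimal sketch of how a zero-based element index (c, h, w) maps to a flat memory offset for c = 4, h = 12, w = 12. The two layouts shown (channel-first and channel-last, with no padding or alignment) are generic assumptions for illustration only; the platform-specific layouts this page refers to are not reproduced in this excerpt and may differ, for example by aligning the width. The function names are hypothetical.

```python
def offset_channel_first(c, h, w, C, H, W):
    """Flat offset when data is stored channel by channel (C, H, W order)."""
    return (c * H + h) * W + w


def offset_channel_last(c, h, w, C, H, W):
    """Flat offset when channels are interleaved per pixel (H, W, C order)."""
    return (h * W + w) * C + c


if __name__ == "__main__":
    C, H, W = 4, 12, 12  # dimensions from the example; indexing starts at 0
    print(offset_channel_first(1, 2, 3, C, H, W))  # (1*12 + 2)*12 + 3 = 171
    print(offset_channel_last(1, 2, 3, C, H, W))   # (2*12 + 3)*4 + 1 = 109
```

The same element lands at a different offset in each layout, which is why output data must be read with the layout that matches the target platform.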


-

+

Memory layouts

-

+

Figure 4. Pre-edited model

-

+

Figure 1. Summary for platform 520, mode 0 (ip evaluator only)

-

+

Figure 2. Summary for platform 530, mode 0 (ip evaluator only)

-

+

Figure 3. Summary for platform 520, mode 1 (with fix model generated)

-

+

Figure 4. Summary for platform 730, mode 2 (with fix model generated and snr check).

-

+

Figure 5. Node details for platform 520, mode 0 (ip evaluator only).

-

+

Figure 6. Node details for platform 530, mode 0 (ip evaluator only).

-

+

Figure 7. Node details for platform 520, mode 1 (with fix model generated).

-

+

Figure 8. Node details for platform 730, mode 2 (with fix model generated and snr check).

+

+ Notes:

diff --git a/docs/toolchain/appendix/yolo_example.md b/docs/toolchain/appendix/yolo_example.md

index 5f6f40d..cedbf97 100644

--- a/docs/toolchain/appendix/yolo_example.md

+++ b/docs/toolchain/appendix/yolo_example.md

@@ -95,7 +95,7 @@ Now, we go through all toolchain flow by KTC (Kneron Toolchain) using the Python

* Run "python" or "ipython" to open the Python shell:


-

+

Figure 1. python shell

-

+

Figure 2. modify input image in example

-

+

Figure 3. modify normalization method in example

-

+

Figure 4. detection result

-

+

Figure 1. python shell

-

+

-

+

Figure 1. Diagram of working flow

-

+

Figure FAQ3.1 VirtualBox

-

+

Figure FAQ3.2 VM status

-

+

Figure FAQ3.3 VM shutdown

-

+

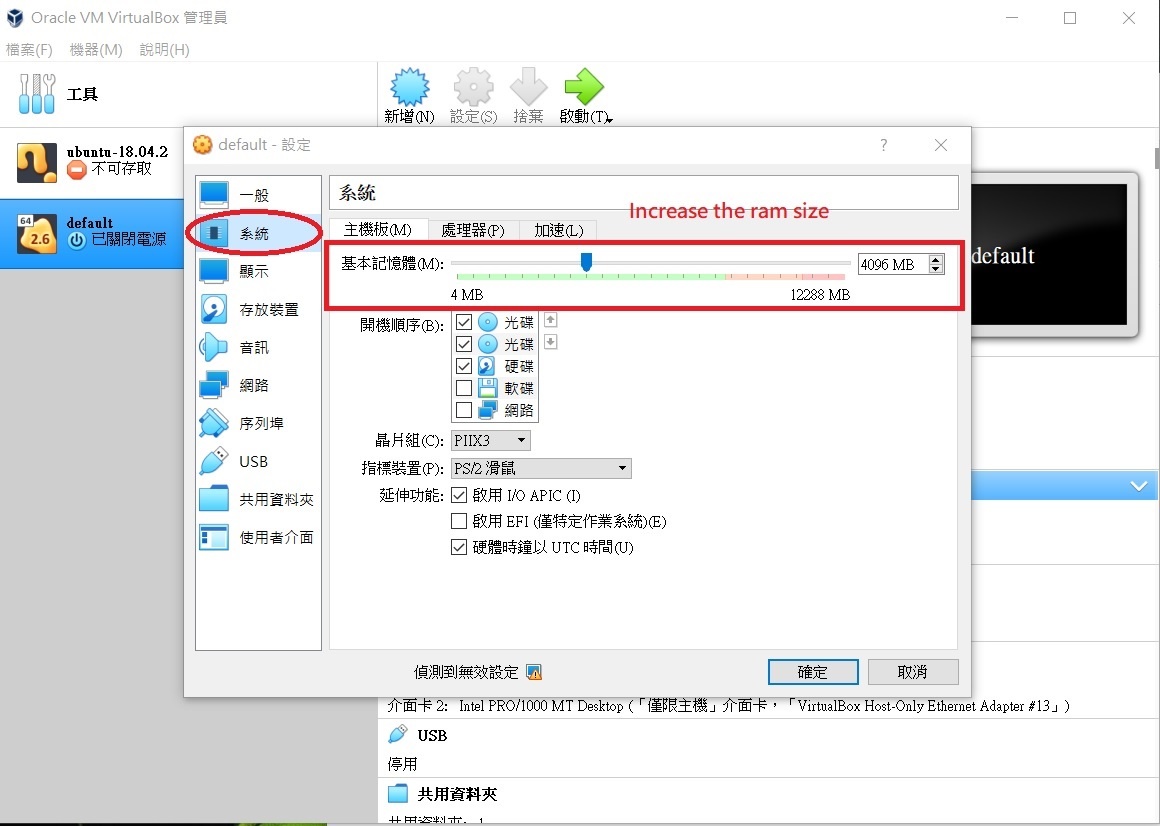

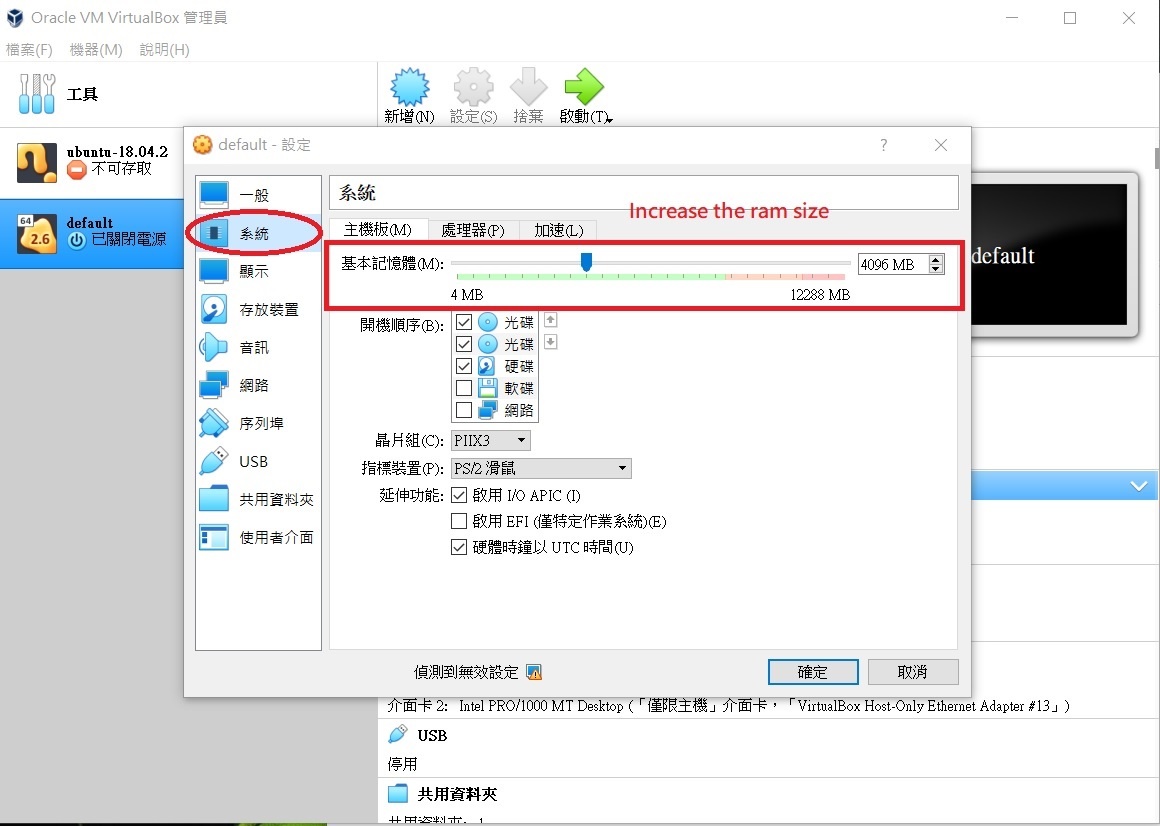

Figure FAQ3.4 VM settings

-

+

Figure 1. PTQ Chart

-

+

Figure 1. SNR-FPS Chart

-

+

Figure 2. Sensitivity Analysis

+

+

+

+ Notes:

diff --git a/docs/toolchain/appendix/yolo_example.md b/docs/toolchain/appendix/yolo_example.md

index 5f6f40d..cedbf97 100644

--- a/docs/toolchain/appendix/yolo_example.md

+++ b/docs/toolchain/appendix/yolo_example.md

@@ -95,7 +95,7 @@ Now, we go through all toolchain flow by KTC (Kneron Toolchain) using the Python

* Run "python" or "ipython" to open the Python shell:

@@ -381,14 +381,14 @@ We leverage the provided the example code in Kneron PLUS to run our YOLO NEF.

2. Modify `kneron_plus/python/example/KL720DemoGenericInferencePostYolo.py` line 20. Change the input image from "bike_cars_street_224x224.bmp" to "bike_cars_street_416x416.bmp".

3. Modify line 105. Change the normalization method in the preprocess config from "Kneron" mode to "Yolo" mode.
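To show why the normalization mode matters, here is a minimal sketch of the two modes applied to raw 8-bit pixel values. The formulas are an assumption not stated on this page: "Kneron" mode is taken to be `x / 256 - 0.5` and "Yolo" mode to be `x / 255`. The function and dictionary names are hypothetical and are not part of the Kneron PLUS API.

```python
def normalize_kneron(pixel):
    """Assumed 'Kneron' mode: map 0..255 to roughly -0.5..0.5."""
    return pixel / 256.0 - 0.5


def normalize_yolo(pixel):
    """Assumed 'Yolo' mode: map 0..255 to 0..1."""
    return pixel / 255.0


# Hypothetical lookup table, for illustration only.
NORMALIZERS = {"Kneron": normalize_kneron, "Yolo": normalize_yolo}


def normalize_image(pixels, mode):
    """Apply the chosen normalization to a flat list of 8-bit pixel values."""
    normalize = NORMALIZERS[mode]
    return [normalize(p) for p in pixels]


if __name__ == "__main__":
    sample = [0, 128, 255]
    print(normalize_image(sample, "Kneron"))  # [-0.5, 0.0, 0.49609375]
    print(normalize_image(sample, "Yolo"))
```

Under these assumptions the two modes produce different input ranges, so a model trained on one range would likely give poor detections if the example were left in the other mode.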

@@ -402,7 +402,7 @@ We leverage the provided the example code in Kneron PLUS to run our YOLO NEF.

Then, you should see that the YOLO NEF detection result is saved to "./output_bike_cars_street_416x416.bmp":

diff --git a/docs/toolchain/appendix/yolo_example_InModelPreproc_trick.md b/docs/toolchain/appendix/yolo_example_InModelPreproc_trick.md

index 44ee125..57af7bd 100644

--- a/docs/toolchain/appendix/yolo_example_InModelPreproc_trick.md

+++ b/docs/toolchain/appendix/yolo_example_InModelPreproc_trick.md

@@ -72,7 +72,7 @@ Now, we go through all toolchain flow by KTC (Kneron Toolchain) using the Python

* Run "python" to open the Python shell:

diff --git a/docs/toolchain/manual_1_overview.md b/docs/toolchain/manual_1_overview.md

index 50ec9d0..06898d2 100644

--- a/docs/toolchain/manual_1_overview.md

+++ b/docs/toolchain/manual_1_overview.md

@@ -1,5 +1,5 @@

# 1. Toolchain Overview

@@ -39,7 +39,7 @@ In the following parts of this page, you can go through the basic toolchain work

Below is a brief diagram showing the workflow for generating the binary from a floating-point model using the toolchain.

diff --git a/docs/toolchain/manual_2_deploy.md b/docs/toolchain/manual_2_deploy.md

index bc7d5a4..1558483 100644

--- a/docs/toolchain/manual_2_deploy.md

+++ b/docs/toolchain/manual_2_deploy.md

@@ -105,28 +105,28 @@ For the docker toolbox, it is actually based on the VirtualBox virtual machine.

* Open the VirtualBox management tool.

* Check the status. There should be only one virtual machine running, unless the user has manually started other virtual machines.

* Close the docker terminal and shut down the virtual machine before adjusting the resource usage.

* Adjust the memory usage in the virtual machine settings. You can also change the CPU count here.

diff --git a/docs/toolchain/quantization/1.1_Introdution_to_Post-training_Quantization.md b/docs/toolchain/quantization/1.1_Introdution_to_Post-training_Quantization.md

index f160dcd..84013eb 100644

--- a/docs/toolchain/quantization/1.1_Introdution_to_Post-training_Quantization.md

+++ b/docs/toolchain/quantization/1.1_Introdution_to_Post-training_Quantization.md

@@ -3,6 +3,6 @@

Post-training quantization (PTQ) uses a batch of calibration data to calibrate the trained model and directly converts the trained FP32 model into a fixed-point model without any further training. The quantization process requires adjusting only a few hyperparameters, so it is simple and fast. For this reason, PTQ is widely used in device-side and cloud-side deployment scenarios. We recommend trying the PTQ method first to see if it meets your requirements.
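The calibration idea can be illustrated with a minimal sketch, assuming symmetric per-tensor 8-bit quantization with a max-abs scale. This is a generic textbook scheme chosen for illustration, not the algorithm the Kneron toolchain actually uses, and all function names are hypothetical.

```python
def calibrate_scale(calibration_batches, num_bits=8):
    """Derive one scale from calibration data (assumed max-abs, symmetric)."""
    max_abs = max(abs(v) for batch in calibration_batches for v in batch)
    qmax = 2 ** (num_bits - 1) - 1  # 127 for 8 bits
    return max_abs / qmax if max_abs > 0 else 1.0


def quantize(values, scale, num_bits=8):
    """Map floats to signed integers, clipping to the representable range."""
    qmax = 2 ** (num_bits - 1) - 1
    qmin = -qmax - 1
    return [max(qmin, min(qmax, round(v / scale))) for v in values]


def dequantize(quantized, scale):
    """Map integers back to approximate float values."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    # No training happens: the scale comes only from observed activations.
    scale = calibrate_scale([[-10.0, 2.5], [63.5, 0.25]])
    print(scale)  # 63.5 / 127 = 0.5
    q = quantize([63.5, -10.2, 0.3, 100.0], scale)
    print(q)  # [127, -20, 1, 127]; 100.0 is clipped
    print(dequantize(q, scale))  # [63.5, -10.0, 0.5, 63.5]
```

Values outside the calibrated range are clipped, which is one reason the calibration data should be representative of real inputs.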

diff --git a/docs/toolchain/quantization/1.3_Optimizing_Quantization_Modes.md b/docs/toolchain/quantization/1.3_Optimizing_Quantization_Modes.md

index 2700f25..b1e4818 100644

--- a/docs/toolchain/quantization/1.3_Optimizing_Quantization_Modes.md

+++ b/docs/toolchain/quantization/1.3_Optimizing_Quantization_Modes.md

@@ -112,7 +112,7 @@ bie_path = km.analysis(

@@ -134,7 +134,7 @@ export MIXBW_DEBUG=True
